The State of Trustworthy AI Policy - Part 1 of 2

With my colleague, Erik Lee, I had the great privilege of speaking at the Information Architecture Conference in Seattle (at the beautiful Seattle Public Central Library) in April of last year. The presentation, titled "Beware of Glorbo: A Case Study and Survey of the Fight Against Misinformation," was about AI Data Poisoning (now also known as Prompt or Context Injection), but it included a section where I summarized the state of AI Data Policy as I understood it then. People told me that the mental models I provided were helpful for getting their bearings on the specific terms surrounding AI policy.

In light of this feedback, I thought it would be good to revisit this talk ahead of an update I'm giving later this year. But first, let's review the state of AI policy terms as of April 2024:

My deck showed the nebulous state of the popular AI policy terms being thrown around. The term names are not intuitively descriptive, and the relationships between them are unclear, especially when sloppy marketing jargon obscures their meanings as technical terms of art.

We start by setting definitions. Terms that were conceptually identical have been grouped.

- Explainable/Transparent AI – AI that can explain the reasoning behind its output

- Robust AI – AI that is technically robust (consistent, accurate, and secure)

- Ethical/Responsible AI – AI that is inclusive, non-discriminatory, and fair – may even have environmental considerations

- Trustworthy AI – AI that encompasses the above principles: safe, secure, consistent, and accountable, to enable trust in the AI output

- Strong AI – AI that is aware of concepts, its own reasoning, and itself as an independent agent

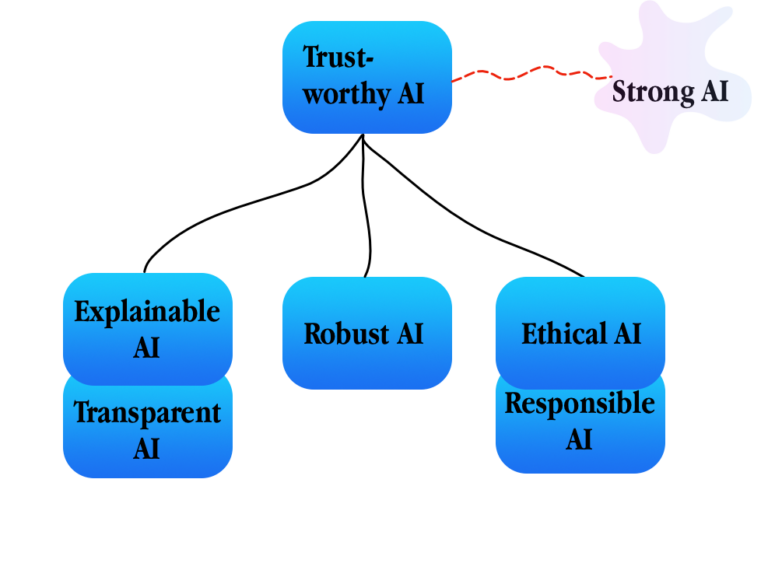

Using these definitions, I drew a diagram to help people visualize the state of these terms.

In the diagram, I placed Trustworthy AI as a superset concept that includes each of the other AI policy concepts (explainable/transparent AI, robust AI, ethical/responsible AI) within it. Strong AI (now more commonly referred to as Artificial General Intelligence, or AGI) is disconnected since it is only theoretical.

This model is imperfect, as these policies often overlap and share goals, definitions, and desired outcomes. I have found, however, that thinking of each of these policies as contributing to the larger goal of Trustworthy AI is a helpful way of understanding them and how they relate to each other.
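For readers who think in code, the mental model can be sketched as a small data structure. This is only an illustration of the diagram: the names `TRUSTWORTHY_AI`, `DISCONNECTED_TERMS`, and `umbrella_for` are made up for this example and do not come from any published framework.

```python
from typing import Optional

# Illustrative sketch only: this structure is an assumption made for the
# example, not part of any published AI policy framework.

# Trustworthy AI as the superset concept. Each key is one of the grouped
# policy terms, paired with its short definition from this post.
TRUSTWORTHY_AI = {
    "Explainable/Transparent AI": "can explain the reasoning behind its output",
    "Robust AI": "consistent, accurate, and secure",
    "Ethical/Responsible AI": "inclusive, non-discriminatory, and fair",
}

# Strong AI (AGI) sits outside the superset because it is only theoretical.
DISCONNECTED_TERMS = {"Strong AI"}


def umbrella_for(term: str) -> Optional[str]:
    """Return the umbrella concept for a term, or None if it has none here."""
    if term in TRUSTWORTHY_AI:
        return "Trustworthy AI"
    return None


if __name__ == "__main__":
    for term in [*TRUSTWORTHY_AI, *sorted(DISCONNECTED_TERMS)]:
        print(f"{term} -> {umbrella_for(term)}")
```

A flat mapping like this cannot express the overlaps between policies, which is the same limitation the diagram has.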

In addition to defining these AI policies and placing them in context relative to one another, I also profiled the organizations making the most waves in these spaces, along with what had been published and legislated up to that point.

The heavy hitters that I had found were:

- European Commission High-Level Expert Group on Artificial Intelligence – founded in 2018, published its first ethics guidelines on Trustworthy AI in 2019

- International Telecommunication Union (ITU), a UN agency – published its Trustworthy AI Framework in 2020 as part of the UN's AI for Good program

- NIST (National Institute of Standards and Technology) – published Trustworthy AI: Managing the Risks of Artificial Intelligence in 2022

- NSF (National Science Foundation) – launched the Trustworthy AI Initiative in 2023

Additionally, I noted some movement in the Executive and Legislative branches of the United States government at that time.

- The White House Select Committee on Artificial Intelligence released the National Artificial Intelligence Research and Development Strategic Plan 2023 Update in May 2023.

- The United States House Bipartisan Task Force on Artificial Intelligence was formed in February 2024.

- The United States Senate held exploratory hearings on AI in the summer of 2023.

Now, nearly a year later, what has changed? A lot, as you can imagine.

I will speak about this at DGIQ West 2025 in a talk titled "Catching Up with Glorbo: Combatting AI Data Poisoning with RAG Frameworks". You won't have to wait until May, as I plan to write about this in Part 2 ahead of the conference. In the meantime, here are some highlights:

- The proliferation of "Open" AI models and frameworks via repositories like Hugging Face and Ollama

- The impact of model distillation techniques (such as those used by DeepSeek) on AI policy, particularly Ethical/Responsible AI policy

- How the ongoing evisceration of United States Federal agencies by the second Trump Administration is stunting US leadership on AI policy

- The continuing forward movement of AI policy in places such as the EU

Thank you to everyone who has encouraged me to continue to write and speak about this subject. Please don't hesitate to reach out to me with helpful feedback (that includes corrections). 🙂 See you soon in Part 2.
